Unity Design Update

After a meeting with our faculty supervisor, it was brought to our attention that our learning environment needed much more functionality and better design aesthetics. After a complete redesign of the module, we have come up with a new base design in which the user can interact with the module more than before. This is a small update, with more to come soon: future updates include the design of the boxes and background, a more interactive GUI, and a scene manager for fine-tuning from the teacher hub.

VR Learning Environment Components Assembled

This is the progress we have made in testing the compatibility of our headset setup once it is assembled. We have been testing and experimenting with different ways to assemble our individual components for the most reliable data …

Emotiv Pro Data successfully imported into Unity

We created our Cortex app using the code shared here: https://docs.google.com/document/d/1QpwGQAg6NFxkhln0ZBAG9LwZYb0S_Z1LbM6x-n5rSig/edit?usp=sharing

This video shows our data imported into a Unity game app. It shows us subscribing to our raw EEG data, motion data, and performance metrics.
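For readers unfamiliar with how a subscription is requested, here is a minimal sketch that builds the JSON-RPC 2.0 `subscribe` message described in Emotiv's public Cortex API documentation. It assumes the stream names `eeg` (raw EEG), `mot` (motion), and `met` (performance metrics) from those docs; the token and session ID are placeholders, and this is not the code in our linked document.

```java
import java.util.List;
import java.util.stream.Collectors;

/**
 * Minimal sketch of a Cortex API "subscribe" request (JSON-RPC 2.0).
 * The token and session ID used in main are placeholders, not real values.
 */
public class CortexSubscribeRequest {

    // Builds the JSON text for a subscribe call without an external JSON library.
    static String build(int id, String cortexToken, String sessionId, List<String> streams) {
        String streamList = streams.stream()
                .map(s -> "\"" + s + "\"")
                .collect(Collectors.joining(","));
        return "{\"jsonrpc\":\"2.0\",\"id\":" + id
                + ",\"method\":\"subscribe\",\"params\":{"
                + "\"cortexToken\":\"" + cortexToken + "\","
                + "\"session\":\"" + sessionId + "\","
                + "\"streams\":[" + streamList + "]}}";
    }

    public static void main(String[] args) {
        // "eeg" = raw EEG, "mot" = motion, "met" = performance metrics
        String request = build(1, "YOUR_CORTEX_TOKEN", "YOUR_SESSION_ID",
                List.of("eeg", "mot", "met"));
        System.out.println(request);
        // This text would be sent over the local Cortex WebSocket connection.
    }
}
```

The request is assembled by hand only to keep the sketch dependency-free; a real client would normally use a JSON library and a WebSocket client.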

Jumping Scenes Using Collision Boxes

We have been experimenting with different methods for module navigation and decided to work with jump scene events. These let the user move between module scenes by touching collision boxes. We currently use two implementations. The first centers on a panel containing multiple buttons, which could be used to select modules: when multiple virtual lessons are installed on one system, this menu can be used to navigate to the desired module. The second uses the jump scene function to navigate back to the main menu. To implement this properly, the same Unity hand models must be used across scenes. Because we use hand movements to control the game, this menu is hidden on the back of the left hand to avoid cluttering the screen. As shown in the video below, this navigation method gives the user full control over scene selection.
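The jump scene logic can be modeled independently of the engine. In Unity this would be a C# `OnTriggerEnter` handler calling `SceneManager.LoadScene`; the Java sketch below only mirrors that idea. The `SceneLoader` callback, the `"Hand"` tag, and all button and scene names are hypothetical stand-ins, not our project's actual identifiers.

```java
import java.util.Map;
import java.util.Optional;

/**
 * Engine-neutral sketch of "jump scene" navigation via collision boxes.
 * Scene loading is abstracted as a callback; all names are hypothetical.
 */
public class JumpSceneMenu {

    /** Hypothetical hook standing in for the engine's scene loader. */
    interface SceneLoader {
        void load(String sceneName);
    }

    private final Map<String, String> buttonToScene;
    private final SceneLoader loader;

    JumpSceneMenu(Map<String, String> buttonToScene, SceneLoader loader) {
        this.buttonToScene = buttonToScene;
        this.loader = loader;
    }

    /**
     * Called when something enters a button's collision box.
     * Only hand colliders trigger navigation, so stray objects are ignored.
     * Returns the scene that was loaded, if any.
     */
    Optional<String> onTriggerEnter(String buttonName, String otherTag) {
        if (!"Hand".equals(otherTag)) {
            return Optional.empty();
        }
        String scene = buttonToScene.get(buttonName);
        if (scene == null) {
            return Optional.empty();
        }
        loader.load(scene);
        return Optional.of(scene);
    }

    public static void main(String[] args) {
        // Hypothetical buttons: two module buttons plus the back-of-hand menu button.
        JumpSceneMenu menu = new JumpSceneMenu(
                Map.of("ModuleA", "LessonScene1",
                       "ModuleB", "LessonScene2",
                       "BackHandMenu", "MainMenu"),
                scene -> System.out.println("Loading " + scene));
        menu.onTriggerEnter("ModuleA", "Hand");      // loads LessonScene1
        menu.onTriggerEnter("BackHandMenu", "Cube"); // ignored: not a hand
    }
}
```

Filtering on the hand tag keeps physics objects that drift into a button from accidentally changing scenes.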

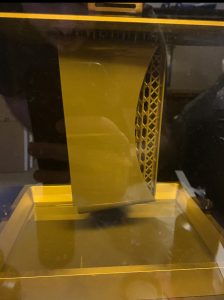

VR headset 3D Print – Model 1

Here is our first design, printed using stereolithography (SLA), a process in which a UV laser cures liquid resin into hardened plastic. As you can see, this is our design produced with this 3D printing method.

Below is a photo of our headset after the structural supports were removed and the print was washed in 90%+ isopropyl alcohol (IPA).

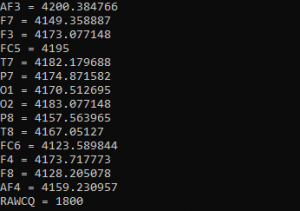

Testing CSV Data Extraction with a Java Program

We tested a potential method for extracting data from the EPOC+. Our primary option is to take raw data in real time, which would let us provide real-time analysis of the user's physiological state during module use. However, we have not yet obtained clearance from EMOTIV to access this data.

To work around this, we have decided to develop an alternative method for data extraction. This method cannot provide real-time analysis, which limits its usefulness for our project. However, if we are unable to obtain or implement real-time data extraction, it can still provide a summary of brain signal activity once a session is complete.
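One simple form such a post-session summary could take is per-channel statistics over every recorded sample. The sketch below assumes the samples have already been parsed into a `[sample][channel]` array and computes the mean, minimum, and maximum per channel; the choice of statistics and the sample values are illustrative assumptions, not our finalized analysis.

```java
import java.util.LinkedHashMap;
import java.util.Map;

/**
 * Sketch of a post-session summary: per-channel mean, minimum, and maximum
 * over all recorded samples. Requires Java 16+ for records.
 */
public class SessionSummary {

    record Stats(double mean, double min, double max) {}

    static Map<String, Stats> summarize(String[] channels, double[][] samples) {
        if (samples.length == 0) {
            throw new IllegalArgumentException("no samples recorded");
        }
        Map<String, Stats> result = new LinkedHashMap<>(); // keeps channel order
        for (int c = 0; c < channels.length; c++) {
            double sum = 0;
            double min = Double.POSITIVE_INFINITY;
            double max = Double.NEGATIVE_INFINITY;
            for (double[] row : samples) {
                double v = row[c];
                sum += v;
                min = Math.min(min, v);
                max = Math.max(max, v);
            }
            result.put(channels[c], new Stats(sum / samples.length, min, max));
        }
        return result;
    }

    public static void main(String[] args) {
        // EPOC+ sensor labels; the values are made up purely to show the output shape.
        String[] channels = {"AF3", "F7"};
        double[][] samples = {{4100.0, 4200.0}, {4110.0, 4180.0}, {4090.0, 4220.0}};
        summarize(channels, samples).forEach((ch, s) ->
                System.out.printf("%s mean=%.1f min=%.1f max=%.1f%n",
                        ch, s.mean(), s.min(), s.max()));
    }
}
```

Because it only needs the finished recording, this kind of summary fits the offline CSV route rather than real-time streaming.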

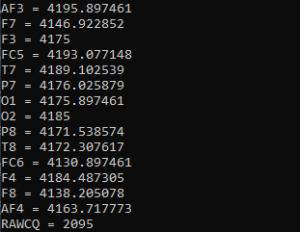

When the EPOC+ records brainwave data, the results are stored in a CSV file. Although we have not yet decided how to display this information, we created a Java program that uses scanners to read the results from each EPOC+ sensor.

Here is a link to our created code:

https://github.com/Senior-Design-VR-Game/ReadFromCSVPub

The CSV file we used to test this program contains extracted EPOC+ data. The values in the images below correspond to the first two data rows of the provided file.
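As a rough illustration of the Scanner-based approach (not the code in our repository), the sketch below reads a header row and then the first two data rows, mapping each column name to its value. It assumes the first line holds the column names and that no field contains a quoted comma; an actual EmotivPRO export may include extra metadata lines, and the column names and numbers here are made up.

```java
import java.util.ArrayList;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;
import java.util.Scanner;

/**
 * Sketch of reading an EPOC+ style CSV with java.util.Scanner.
 * Assumes a single header line and no quoted commas inside fields.
 */
public class EpocCsvReader {

    /** Reads up to maxRows data rows, mapping each header name to its value. */
    static List<Map<String, String>> readRows(Scanner in, int maxRows) {
        List<Map<String, String>> rows = new ArrayList<>();
        if (!in.hasNextLine()) {
            return rows;
        }
        String[] header = in.nextLine().split(",");
        while (in.hasNextLine() && rows.size() < maxRows) {
            String line = in.nextLine();
            if (line.isBlank()) {
                continue; // skip empty lines
            }
            String[] fields = line.split(",", -1); // -1 keeps trailing empty fields
            Map<String, String> row = new LinkedHashMap<>();
            for (int i = 0; i < header.length && i < fields.length; i++) {
                row.put(header[i].trim(), fields[i].trim());
            }
            rows.add(row);
        }
        return rows;
    }

    public static void main(String[] args) {
        // Inline sample with made-up numbers; a file could be opened with new Scanner(Path.of(...)).
        String csv = "Timestamp,AF3,F7\n"
                + "0.000,4100.5,4201.3\n"
                + "0.008,4102.1,4199.8\n"
                + "0.016,4099.7,4200.4\n";
        try (Scanner in = new Scanner(csv)) {
            for (Map<String, String> row : readRows(in, 2)) {
                System.out.println(row);
            }
        }
    }
}
```

Keying each value by its header name means the code does not depend on a fixed column order, which helps if the export layout changes.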

Interactive Environment

Initial testing of interaction with the environment.

We have the hands moving and have spawned simple objects with collisions applied to them. The hands can grab these objects by closing the fingers around their collision hitboxes in a grasping motion. Objects without collisions applied serve as platforms, since they cannot move.
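A grasp can be approximated as "fingertips inside the hitbox." The sketch below treats an object as grabbed when the thumb tip and at least one other fingertip lie inside its axis-aligned box. This rule is an assumption for illustration only; it is not necessarily how our hand-tracking setup decides a grab.

```java
/**
 * Sketch of a simple grab check against an axis-aligned hitbox.
 * The "thumb plus one finger" rule is an illustrative assumption.
 * Requires Java 16+ for records.
 */
public class GrabCheck {

    record Vec3(double x, double y, double z) {}

    /** Axis-aligned bounding box defined by its min and max corners. */
    record Box(Vec3 min, Vec3 max) {
        boolean contains(Vec3 p) {
            return p.x() >= min.x() && p.x() <= max.x()
                && p.y() >= min.y() && p.y() <= max.y()
                && p.z() >= min.z() && p.z() <= max.z();
        }
    }

    /** True when the thumb tip and at least one other fingertip are inside the hitbox. */
    static boolean isGrabbed(Vec3 thumbTip, Vec3[] fingerTips, Box hitbox) {
        if (!hitbox.contains(thumbTip)) {
            return false;
        }
        for (Vec3 tip : fingerTips) {
            if (hitbox.contains(tip)) {
                return true;
            }
        }
        return false;
    }

    public static void main(String[] args) {
        Box cube = new Box(new Vec3(0, 0, 0), new Vec3(1, 1, 1));
        Vec3 thumb = new Vec3(0.5, 0.5, 0.9);
        Vec3[] fingers = {new Vec3(0.5, 0.5, 0.1), new Vec3(2, 2, 2)};
        System.out.println(isGrabbed(thumb, fingers, cube));             // true
        System.out.println(isGrabbed(new Vec3(3, 3, 3), fingers, cube)); // false
    }
}
```

Requiring the thumb plus another finger, rather than any single touch, helps avoid picking up objects the hand merely brushes against.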

Welcome!

Updates on our progress will be posted here, and other pages will be updated accordingly.